Write Skills Like Workstations, Not Prompts

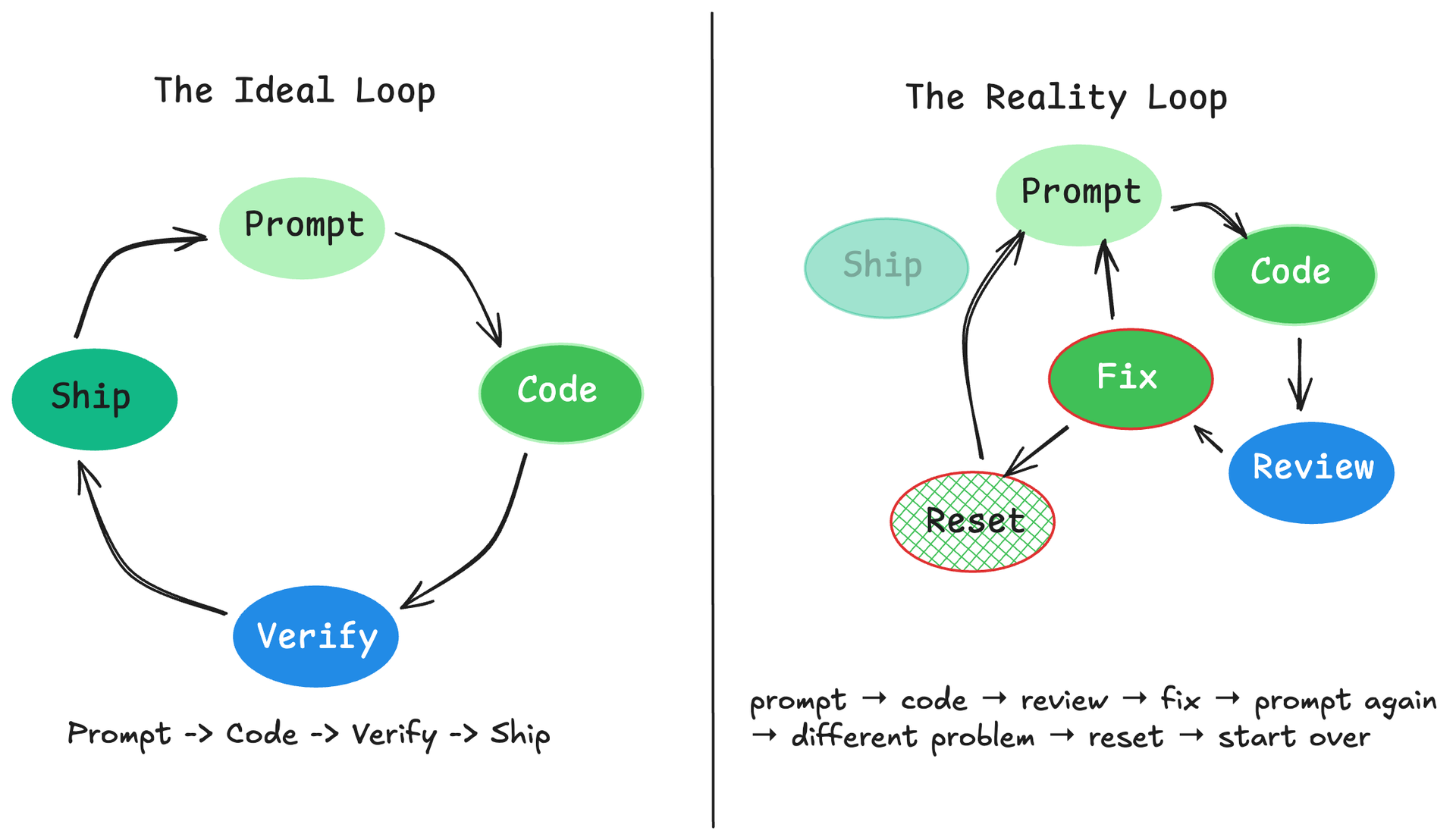

Claude Code skills work best when you treat them as workstations, not prompts: folders with scripts, gotchas, templates, and progressive disclosure that manage the agent's attention budget at runtime.

Ship Prompts Like Software: Regression Testing for LLMs

Because "it seemed fine when I tested it" is not a deployment strategy.

Part 4 of 4: Evaluation-Driven Development for LLM Systems

Four Ways to Grade an LLM (Without Going Broke)

Your evaluation technique should match the question you're asking, not your ambition.

Your Golden Dataset Is Worth More Than Your Prompts

Most teams spend weeks perfecting prompts and minutes on evaluation data. That's backwards.

Part 2 of 4: Evaluation-Driven Development for LLM Systems

Build LLM Evals You Can Trust

If five correct responses are enough to ship an LLM feature, what are you actually measuring: quality, or luck?

Part 1 of 4: Evaluation-Driven Development for LLM Systems

Claude Code Tips From the Guy Who Built It

Boris Cherny created Claude Code at Anthropic. Over three Twitter threads (early January, late January, and February 2026), he shared